TL;DR: B2B product catalogs are structurally different from B2C catalogs. The complexity is not just more SKUs; it is a different kind of complexity entirely.

B2B ecommerce has a product data problem that most PIM conversations underserve.

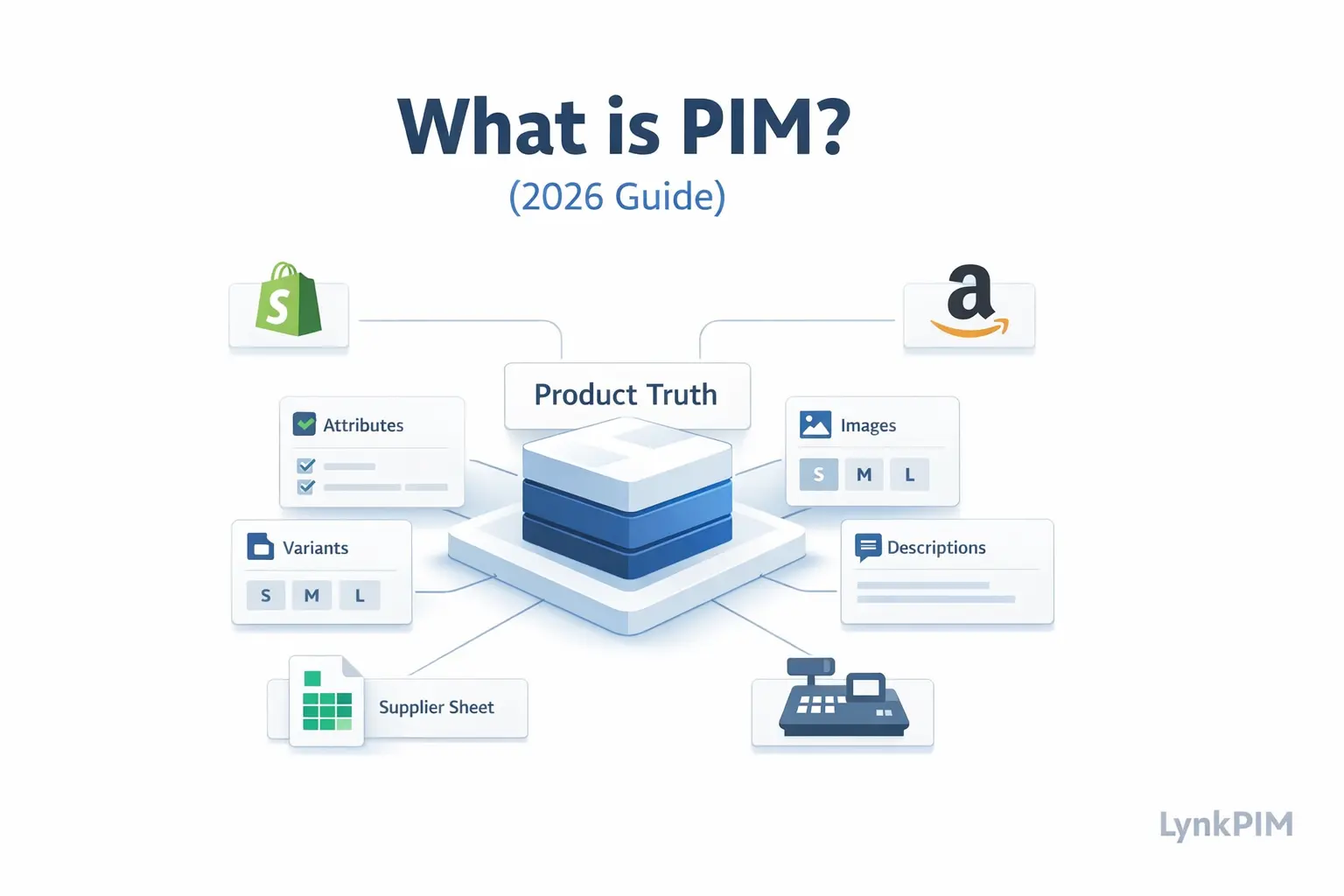

The standard PIM use case described in most guides is implicitly B2C: a brand managing product descriptions, images, and channel syndication across Shopify, Amazon, and Google Shopping. That use case is real and well-documented.

But B2B product catalogs are structurally different. The complexity is not just more SKUs — it is a different kind of complexity entirely. Technical specifications run deeper. Variant logic is tied to engineering constraints, not just color and size. Buyers may see different prices, different product sets, and different attribute views depending on their account tier, geography, or contract terms.

Managing this well without a PIM is possible at small scale. At any meaningful catalog size, it becomes operationally fragile very quickly.

This guide covers how PIM functions specifically in B2B ecommerce contexts — what it handles, where it makes the biggest operational difference, and what to look for when evaluating whether a PIM is genuinely built for B2B workflows.

How B2B product catalog complexity differs from B2C

The most useful starting point is understanding where B2B catalog management diverges structurally from B2C, not just in scale but in kind.

Technical attribute depth

B2C products typically need a moderate set of commercial attributes: name, description, dimensions, material, color, size, price, images. The goal is to help a consumer understand and buy a product.

B2B products often require a much deeper attribute layer. A single industrial component might need:

- Material grade and alloy composition

- Tolerance specifications and load ratings

- Certifications and compliance standards (ISO, CE, RoHS, UL)

- Compatibility matrices with other product families

- Operating condition ranges (temperature, voltage, pressure)

- Packaging configurations (unit, case, pallet)

- Lead time and minimum order quantity by supplier or region

- Regulatory documentation references

These attributes are not decorative. They are decision-critical. A buyer specifying components for a manufacturing process needs this data to be accurate, complete, and structured, not buried in a PDF or approximated in a text description.

When this data lives in spreadsheets, supplier emails, and legacy ERP exports, the operational cost of keeping it accurate and channel-ready is enormous.

Variant logic tied to configuration, not presentation

B2C variants are largely presentational: a shirt comes in blue, red, and green, in sizes S through XL. The variant logic is straightforward.

B2B variants are often configurable or modular. A single parent product might have variants determined by:

- Material specification choices that affect compliance certifications

- Dimensional combinations that require different packaging

- Voltage or frequency configurations for different geographic markets

- Custom assembly options that alter the bill of materials

- OEM-specific part number mappings

This means variant relationships in B2B catalogs can be far more complex than parent-child structures built for apparel. The variant model needs to capture not just what is different between SKUs, but what those differences mean for downstream systems, documentation, and channel outputs.

Buyer-specific catalog visibility

In B2B ecommerce, not every buyer sees the same catalog. Depending on the business model, different buyer groups may have:

- Access to different product sets (a distributor sees a different range than a direct buyer)

- Negotiated prices that should not be visible to other buyers

- Custom product configurations or private-label variants

- Different required attributes based on their industry or geography

- Localized documentation sets relevant to their regulatory environment

This buyer-specificity is not a personalization feature layered on top of a standard catalog. It is a structural characteristic of how B2B commerce works. The product data layer needs to support it natively.

Multi-channel distribution with very different format requirements

B2B products reach buyers through a wider variety of channels than most B2C catalogs. Alongside a direct ecommerce storefront, B2B teams typically need to distribute product data to:

- Distributor portals and partner catalogs

- Procurement platforms (Ariba, Coupa, trade-specific platforms)

- Print and digital product catalogs for sales teams

- ERP integrations at buyer organizations

- Industry data standards formats (ETIM, BMEcat, UNSPSC, GS1)

- OEM and private-label partner systems

Each of these channels has different format requirements, different mandatory fields, and different data structures. Managing separate data sets for each channel manually is where B2B product operations typically break down.

Where PIM makes the biggest operational difference in B2B

Given that context, the value of a PIM in B2B is not primarily about making product pages look better. It is about making complex product data operationally manageable.

Centralized technical attribute management

A PIM provides a single place to define, govern, and maintain the deep technical attribute sets that B2B products require.

Instead of technical specs living in ERP fields that were never designed for content management, in supplier PDFs that need manual extraction, or in engineering spreadsheets that only one person can interpret, a PIM makes technical attributes:

- Structured with defined field types (numeric with units, enumerated lists, boolean flags, document references)

- Governed with validation rules that catch missing or out-of-range values

- Searchable and filterable across the full catalog

- Exportable in the format each downstream channel requires

This matters most during product launches, when engineering documentation needs to become commercial product data quickly, and during product updates, when a spec change needs to propagate accurately across every channel and system simultaneously.
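To make "structured and governed" concrete, here is a minimal Python sketch of typed attributes with validation rules. The class names, attribute names, units, and ranges are all hypothetical and do not reflect any particular PIM's schema; the point is only that a numeric-with-units field and an enumerated field can each reject missing or out-of-range values before publication.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class NumericAttribute:
    """Numeric field with a unit and an allowed range (hypothetical schema)."""
    name: str
    unit: str
    min_value: float
    max_value: float

    def validate(self, value: Optional[float]) -> list[str]:
        if value is None:
            return [f"{self.name}: missing value"]
        if not (self.min_value <= value <= self.max_value):
            return [f"{self.name}: {value} {self.unit} is out of range"]
        return []


@dataclass(frozen=True)
class EnumAttribute:
    """Field restricted to a controlled list of values (hypothetical schema)."""
    name: str
    allowed: frozenset

    def validate(self, value: Optional[str]) -> list[str]:
        if value is None:
            return [f"{self.name}: missing value"]
        if value not in self.allowed:
            return [f"{self.name}: '{value}' is not an allowed value"]
        return []


# Illustrative schema; names and limits are assumptions, not real requirements.
SCHEMA = [
    NumericAttribute("max_operating_temp", "degC", -40.0, 125.0),
    NumericAttribute("rated_voltage", "V", 0.0, 1000.0),
    EnumAttribute("certification", frozenset({"ISO", "CE", "RoHS", "UL"})),
]


def validate_record(record: dict) -> list[str]:
    """Collect every validation error for one product record."""
    errors: list[str] = []
    for attribute in SCHEMA:
        errors.extend(attribute.validate(record.get(attribute.name)))
    return errors


# One out-of-range value and one missing value are reported; the
# certification passes.
errors = validate_record({"max_operating_temp": 150.0, "certification": "CE"})
```

Collecting all errors in one pass, rather than stopping at the first, suits catalog work because a data steward can fix a record in a single edit.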

Variant modeling that reflects actual product relationships

For B2B catalog teams, a well-designed PIM variant model is one of the highest-value configuration investments.

The goal is to define which attributes belong at the parent product level (brand, product family, core compliance certifications), which attributes define variant differentiation (material grade, configuration option, dimensional specification), and which attributes are truly SKU-level (barcode, specific part number, packaging unit).

When this model is clean, the catalog scales predictably. Adding a new configuration option to a product family does not require duplicating every shared attribute. Updating a compliance certification at the parent level propagates correctly to all relevant variants. Channel exports pull the right data structure for each output.

When this model is weak or missing, B2B catalogs tend to accumulate flat SKU lists where every variant carries duplicated parent-level data, inconsistencies are invisible until they cause channel errors, and any structural change requires manual intervention across dozens or hundreds of records.
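The three-level split described above can be sketched in a few lines of Python. This is an illustration under assumed names and made-up data, not a description of how any specific PIM stores variants. Each level holds only its own attributes, and the full record is resolved on read, so a change at the parent level shows up in every SKU without touching the SKU records.

```python
from collections import ChainMap

# All values below are made-up illustration data.
parent = {
    "brand": "ExampleCo",
    "product_family": "Hydraulic fittings",
    "certifications": ["ISO"],
}
variant = {"material_grade": "316L", "thread_size": "M12"}
sku = {"part_number": "HF-316L-M12", "packaging_unit": "case"}


def resolve(sku_attrs: dict, variant_attrs: dict, parent_attrs: dict) -> dict:
    """Flatten the three levels; the most specific level wins on key clashes."""
    # ChainMap searches its maps left to right, so SKU values shadow
    # variant values, which shadow parent values.
    return dict(ChainMap(sku_attrs, variant_attrs, parent_attrs))


before = resolve(sku, variant, parent)

# A certification update is made once, at the parent level...
parent["certifications"] = ["ISO", "CE"]

# ...and the next resolution of any SKU in the family picks it up.
after = resolve(sku, variant, parent)
```

In a flat SKU list, the same certification change would have to be repeated on every record that copied the parent data.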

Approval workflows that reflect B2B governance requirements

B2B product data often has higher governance stakes than B2C. A wrong specification on a consumer product description is a customer service problem. A wrong specification on an industrial component listing is potentially a liability and compliance issue.

This means B2B catalog workflows typically need:

- Technical review by engineering or product management before specifications are published

- Legal or compliance review before certification claims are made

- Procurement or pricing team approval before commercial terms are visible

- Regional validation for market-specific regulatory requirements

A PIM with configurable approval workflows allows these review steps to be built into the publishing process, rather than handled through email chains and manual checklists that are easy to skip under deadline pressure.
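As a rough sketch of what "built into the publishing process" means, the Python below models a draft that cannot be published until every required review has signed off. The review names mirror the list above; the class and method names are hypothetical and not taken from any PIM product.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Review steps taken from the list above; the identifiers are assumptions.
REQUIRED_REVIEWS = ("technical", "compliance", "commercial", "regional")


@dataclass
class ProductDraft:
    sku: str
    approvals: set[str] = field(default_factory=set)
    published: bool = False

    def approve(self, review: str) -> None:
        """Record a sign-off for one review step."""
        if review not in REQUIRED_REVIEWS:
            raise ValueError(f"unknown review step: {review}")
        self.approvals.add(review)

    def missing_reviews(self) -> list[str]:
        return [r for r in REQUIRED_REVIEWS if r not in self.approvals]

    def publish(self) -> None:
        """Publish only when every required review has approved."""
        missing = self.missing_reviews()
        if missing:
            raise PermissionError(
                f"cannot publish {self.sku}: missing {', '.join(missing)}"
            )
        self.published = True


draft = ProductDraft("HF-316L-M12")
draft.approve("technical")
draft.approve("compliance")

try:
    draft.publish()  # blocked: commercial and regional reviews are missing
except PermissionError as exc:
    blocked_reason = str(exc)
```

The gate lives in `publish` itself, so skipping a review under deadline pressure becomes an explicit error instead of a silent omission.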

Channel-specific output without maintaining separate data sets

The multi-channel distribution reality of B2B commerce is one of the strongest arguments for a centralized PIM.

Rather than maintaining separate spreadsheets for distributor portals, a different export format for procurement platforms, and a manual process for updating the print catalog, a PIM allows the team to:

- Maintain one authoritative product record

- Define the transformation rules for each output format

- Validate completeness against channel-specific requirements before export

- Trigger syndication to multiple channels from a single approved source

This does not mean every channel receives identical data. Channel-specific fields, format requirements, and language variants are managed as output configurations on top of the core product record — not as separate data maintenance projects.
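One way to picture output configurations is as per-channel field maps plus a list of mandatory fields, applied to a single core record. The sketch below assumes hypothetical channel names, field names, and sample data; real channel requirements will differ.

```python
from __future__ import annotations

# Hypothetical channel configurations: which core fields each channel needs
# and what each field is called in that channel's output.
CHANNELS: dict[str, dict] = {
    "distributor_portal": {
        "field_map": {
            "part_number": "PartNo",
            "name": "Description",
            "rated_pressure_bar": "PressureBar",
        },
        "required": ["part_number", "name", "rated_pressure_bar"],
    },
    "print_catalog": {
        "field_map": {"part_number": "Item", "name": "Title"},
        "required": ["part_number", "name"],
    },
}


def missing_fields(record: dict, channel: str) -> list[str]:
    """List the channel's mandatory fields that are absent or empty."""
    required = CHANNELS[channel]["required"]
    return [f for f in required if record.get(f) in (None, "")]


def export(record: dict, channel: str) -> dict:
    """Validate completeness, then rename fields for the target channel."""
    missing = missing_fields(record, channel)
    if missing:
        raise ValueError(f"{channel}: missing {', '.join(missing)}")
    field_map = CHANNELS[channel]["field_map"]
    return {out: record[src] for src, out in field_map.items() if src in record}


# One authoritative record (made-up data) feeding two channels.
record = {"part_number": "HF-316L-M12", "name": "Hydraulic fitting, 316L, M12"}

print_row = export(record, "print_catalog")
portal_gaps = missing_fields(record, "distributor_portal")
```

The same record is ready for the print catalog but not for the distributor portal, and the gap is reported before any file is sent.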

Buyer-specific catalogs: how PIM supports account-level product visibility

Buyer-specific catalog management is one of the most operationally complex requirements in B2B ecommerce, and it is worth addressing directly.

The challenge is this: the same physical product may need to appear differently to different buyers. A distributor may see a different price tier. A contract customer may have access to a private-label variant. A buyer in a regulated market may need to see additional compliance documentation that is not relevant in other regions.

There are two main ways a PIM supports this:

Catalog segmentation at the product level

Some PIM platforms support product visibility rules that determine which buyer groups or account segments can see which products. This is typically configured at the catalog or collection level, allowing different product sets to be assembled for different buyer tiers without duplicating product records.

This approach works well for buyer groups with genuinely different product access — where a distributor catalog is a meaningfully different subset of the full product range.

Attribute-level visibility and output rules

For cases where the same product is visible to multiple buyer groups but needs to show different attribute views — different pricing fields, different documentation sets, different specification emphasis — attribute-level visibility rules allow the same product record to produce different outputs for different channel or buyer contexts.

This is more granular than full catalog segmentation. It handles the common B2B scenario where the product is the same, but what each buyer needs to see about it is different.

In practice, many B2B operations use a combination of both approaches: catalog segmentation to control product access, and attribute-level output rules to control what each buyer sees within their accessible catalog.
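The combination of both approaches can be sketched as two lookups applied to one set of product records. The segment names, SKUs, and attribute names below are invented for illustration, and a real PIM would express these rules in its own configuration rather than in code.

```python
# Made-up product records; each product is stored exactly once.
PRODUCTS = {
    "HF-100": {
        "name": "Hydraulic fitting",
        "spec_sheet": "spec-ref-100",
        "compliance_doc": "doc-ref-001",
    },
    "HF-200-PL": {
        "name": "Private-label fitting",
        "spec_sheet": "spec-ref-200",
        "compliance_doc": "doc-ref-002",
    },
}

# Catalog segmentation: which products each buyer segment may access.
CATALOG_ACCESS = {
    "distributor": {"HF-100"},
    "contract_customer": {"HF-100", "HF-200-PL"},
}

# Attribute-level rules: which fields each segment sees on those products.
VISIBLE_ATTRIBUTES = {
    "distributor": {"name", "spec_sheet"},
    "contract_customer": {"name", "spec_sheet", "compliance_doc"},
}


def catalog_view(segment: str) -> dict:
    """Build the catalog a segment sees without duplicating product records."""
    allowed_skus = CATALOG_ACCESS[segment]
    allowed_attrs = VISIBLE_ATTRIBUTES[segment]
    return {
        sku: {k: v for k, v in attrs.items() if k in allowed_attrs}
        for sku, attrs in PRODUCTS.items()
        if sku in allowed_skus
    }


distributor_view = catalog_view("distributor")
contract_view = catalog_view("contract_customer")
```

Both views are derived from the same records, so correcting a product name once changes it for every segment that can see the product.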

Industry data standards: what B2B PIM needs to support

One requirement that separates genuine B2B PIM capability from general-purpose product management tools is support for industry data standards.

B2B product data exchange relies on standardized formats that allow product information to flow between trading partners, procurement systems, and industry databases in structured, interoperable ways. The most common include:

ETIM (Electrotechnical Information Model): the dominant standard for electrotechnical and installation products in European B2B markets. Defines product classes with standardized attributes and controlled value lists.

BMEcat: a widely used XML standard for electronic product catalog exchange, common in German-speaking markets and industrial procurement.

UNSPSC (United Nations Standard Products and Services Code): a global hierarchical classification system used in procurement and spend analysis.

GS1 standards: the global framework for product identification and data exchange, including GTIN (Global Trade Item Number) and the GS1 Data Model for product attributes.

For B2B teams operating in industries where these standards are expected by trading partners, a PIM that cannot map to and export in these formats creates a significant integration burden.

When evaluating a PIM for B2B use, confirming support for the specific standards relevant to your industry and trading partner network should be an early-stage requirement, not a late-stage discovery.
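Standards support often starts with small, mechanical checks. As one example, a GTIN ends in a check digit computed with the GS1 modulo-10 scheme: working from the rightmost data digit, digits are weighted 3, 1, 3, 1 and so on, and the check digit brings the weighted sum up to a multiple of 10. The Python sketch below validates that digit; the function names are illustrative, and passing this check says nothing about whether a GTIN is actually assigned to a product.

```python
def gtin_check_digit(body: str) -> int:
    """Compute the GS1 check digit for a GTIN given without its last digit."""
    if not (body.isascii() and body.isdigit()):
        raise ValueError("GTIN body must contain only digits 0-9")
    total = 0
    # Walk from the rightmost data digit; weights alternate 3, 1, 3, 1, ...
    for position, char in enumerate(reversed(body)):
        weight = 3 if position % 2 == 0 else 1
        total += int(char) * weight
    return (10 - total % 10) % 10


def is_valid_gtin(gtin: str) -> bool:
    """Check length (GTIN-8, -12, -13, or -14) and the trailing check digit."""
    if not (gtin.isascii() and gtin.isdigit()):
        return False
    if len(gtin) not in (8, 12, 13, 14):
        return False
    return gtin_check_digit(gtin[:-1]) == int(gtin[-1])


# Sample 13-digit and 12-digit values used purely to exercise the arithmetic.
ok_13 = is_valid_gtin("4006381333931")
ok_12 = is_valid_gtin("036000291452")
bad = is_valid_gtin("4006381333932")  # last digit altered
```

Running a check like this as a PIM validation rule catches transposed or mistyped identifiers before they reach a trading partner's system.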

Practical checklist: is your current B2B product data operation at risk?

The following signals indicate that B2B product data management has scaled past what spreadsheets and manual processes can reliably handle:

- Technical specifications for the same product are inconsistent across distributor portal, direct ecommerce site, and internal sales tools

- Updating a compliance certification requires manual changes across multiple systems and files

- Variant relationships are represented as flat SKU lists where every record carries duplicated parent-level data

- Channel export preparation requires a dedicated person pulling data from multiple sources before each submission

- Buyer-specific pricing or visibility is managed through separate spreadsheet versions of the product catalog

- New product launches consistently run late because data preparation is a bottleneck

- Industry standard format submissions (ETIM, BMEcat, GS1) require significant manual reformatting from internal data

- There is no clear workflow for engineering or compliance review of product specifications before they are published

- The team cannot answer confidently: which version of this product record is the current approved version?

If more than three of these apply, the operational risk from unmanaged product data complexity is likely already affecting time-to-market, channel accuracy, and team capacity.

What to look for in a PIM built for B2B

Not every PIM is designed to handle B2B catalog complexity. When evaluating options specifically for B2B use cases, these capabilities matter most:

Deep attribute modeling: support for technical field types (numeric with units, range values, multi-value attributes, document references), not just text and image fields optimized for consumer product descriptions.

Flexible variant architecture: ability to define parent-child-variant relationships that reflect actual product configuration logic, not just presentational color-size grids.

Configurable approval workflows: multi-step review processes with role-based permissions, so technical, compliance, and commercial review can be built into the publishing path.

Buyer-specific catalog support: either through catalog segmentation, attribute visibility rules, or both, depending on the business model.

Industry standard format export: native or configurable support for relevant B2B data standards, not just CSV and generic JSON outputs.

API-first architecture: B2B operations typically involve more system-to-system integrations than B2C. A PIM that exposes a well-documented API makes it significantly easier to connect to ERP systems, procurement platforms, and distributor portals without bespoke integration projects for each connection.

Audit logging and version history: in regulated industries, being able to demonstrate what version of a specification was published and when is not a nice-to-have. It is a compliance requirement.
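To illustrate the audit requirement, here is a minimal Python sketch of an append-only version history that can answer "what was published as of a given date". The class names, user names, and sample data are hypothetical; a production system would also need durable storage and access control.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Version:
    number: int
    data: dict
    published_at: datetime
    published_by: str


class ProductHistory:
    """Append-only list of published versions for one product."""

    def __init__(self) -> None:
        self._versions: list[Version] = []

    def publish(self, data: dict, user: str, at: datetime) -> Version:
        # Refuse out-of-order timestamps so the history stays chronological.
        if self._versions and at < self._versions[-1].published_at:
            raise ValueError("publish time precedes the latest version")
        version = Version(len(self._versions) + 1, dict(data), at, user)
        self._versions.append(version)
        return version

    def as_of(self, moment: datetime) -> Optional[Version]:
        """Return the version that was live at the given moment, if any."""
        current = None
        for version in self._versions:
            if version.published_at <= moment:
                current = version
        return current


# Made-up example: a load rating is revised downward in March.
history = ProductHistory()
history.publish({"load_rating_kg": 500}, "engineer_a",
                datetime(2024, 1, 10, tzinfo=timezone.utc))
history.publish({"load_rating_kg": 450}, "engineer_b",
                datetime(2024, 3, 5, tzinfo=timezone.utc))

snapshot = history.as_of(datetime(2024, 2, 1, tzinfo=timezone.utc))
```

Because versions are never edited in place, the February snapshot still shows the original rating after the March revision.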

Summary

B2B product catalog management is not a scaled-up version of B2C product management. The structural differences — technical attribute depth, configuration-driven variant logic, buyer-specific visibility, multi-system distribution, industry data standards — create a genuinely different operational challenge.

A PIM built for this context makes technical attributes structured and governable, variant relationships accurate and scalable, approval workflows rigorous enough to meet compliance requirements, and channel outputs manageable without maintaining separate data sets for each distribution path.

The teams that run into problems are almost always the ones who have scaled their catalog past what spreadsheets and manual coordination can handle, but have not yet put the infrastructure in place to manage product data as a governed operational system.

The inflection point usually comes at a combination of catalog size, channel count, and team involvement rather than at any single threshold. But when it comes, the cost of continuing without structure typically exceeds the cost of fixing the data model by a significant margin.

Frequently asked questions

Is a PIM different for B2B versus B2C?

The core function — centralizing, governing, and distributing product data — is the same. But B2B use cases require deeper technical attribute modeling, more complex variant architecture, configurable approval workflows, buyer-specific catalog support, and often compatibility with industry data standards that are rarely relevant in B2C contexts.

Can a standard ecommerce PIM handle B2B requirements?

Some can, with configuration. Others are built primarily for B2C commerce and lack the technical attribute depth, variant modeling flexibility, or industry standard export capabilities that B2B operations need. Evaluating specifically against B2B requirements — not just general feature lists — is essential.

How does a PIM handle buyer-specific pricing in B2B?

A PIM is not a pricing engine, and pricing logic typically lives in an ERP or commerce platform. However, a PIM can support buyer-specific catalog visibility, controlling which products or attributes are visible to which buyer segments, and can feed structured product data into commerce systems that handle buyer-specific pricing logic downstream.

What is the most common B2B product data failure mode?

Flat SKU lists without governed variant relationships. When every configuration option is a separate record carrying its own copy of every parent-level attribute, the catalog becomes extremely difficult to maintain accurately. A spec change at the product family level requires updating dozens or hundreds of individual records. This is the most common source of inconsistency in B2B catalogs that have grown past spreadsheet scale.

When does a B2B team typically need a PIM?

Usually when a combination of factors converges: catalog size past a few hundred SKUs with significant variant depth, multiple distribution channels with different format requirements, more than one team member responsible for product data, and/or industry standard format submission requirements from trading partners. Any one of these factors alone might be manageable. All of them together typically exceed what manual processes can handle reliably.

How does PIM connect to ERP in a B2B environment?

The typical model is that ERP owns operational data (inventory, pricing, order management, procurement) and PIM owns product content and attributes. ERP provides reference data (SKU codes, supplier identifiers, cost data) that the PIM uses as anchors for product records. PIM provides structured, enriched product data that downstream commerce and distribution systems consume. The connection between them is usually via API or scheduled data sync, with clear ownership boundaries that prevent the two systems from overwriting each other’s data.
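The ownership boundary can be sketched as a field-level rule applied during sync: each field has exactly one owning system, and updates from the other system are rejected, not merged. The field names and the `apply_update` helper below are assumptions for illustration, not part of any ERP or PIM API.

```python
# Hypothetical ownership table: every synced field has exactly one owner.
FIELD_OWNER = {
    "sku": "erp",
    "supplier_id": "erp",
    "unit_cost": "erp",
    "name": "pim",
    "description": "pim",
    "material_grade": "pim",
}


def apply_update(record: dict, update: dict, source: str) -> tuple:
    """Apply only the fields the source system owns; report the rest."""
    accepted = {k: v for k, v in update.items() if FIELD_OWNER.get(k) == source}
    rejected = sorted(k for k in update if k not in accepted)
    # Build a new record so the caller's original is never mutated.
    return {**record, **accepted}, rejected


# Made-up record and an ERP sync that tries to touch a PIM-owned field.
record = {"sku": "HF-316L-M12", "unit_cost": 4.20, "name": "Hydraulic fitting"}
erp_sync = {"unit_cost": 4.35, "name": "HYD FITTING 316L"}

merged, rejected = apply_update(record, erp_sync, "erp")
```

The cost change goes through, while the ERP's terse item name is reported as rejected and does not overwrite the enriched name maintained in the PIM.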